Three layers of AI privacy

What "private" actually means when you send a prompt to an AI provider

On May 6, Canada's Privacy Commissioner published the results of a joint investigation with the verdict that OpenAI violated federal privacy law and three provincial privacy regimes in the development and deployment of ChatGPT because the vast personal data the company scraped from the public web without consent included health information, political views, and children's data, and they launched ChatGPT knowing the model fabricated facts about real people, operated for years without formal data retention or deletion policies, and until April 2024 used a design pattern the regulators describe as forcing users to give up their chat history in order to opt out of training. OpenAI agreed to a remediation timeline of three to six months.

The ruling is consequential, but it is also late. And in a roughly twelve-month window, every major consumer AI provider (OpenAI, Google, Microsoft, Meta, and finally Anthropic) touting a we-don't-train-on-your-data-by-default policy changed their default setting in the same direction, with the same UX pattern. GitHub Copilot extended the same shift to its Free, Pro, and Pro+ tiers two weeks ago.

The default changed away from user privacy. But, anyway, privacy looks different at deeper architectural layers.

The contractual layer is the outermost

The most common privacy claim across consumer AI is contractual. The provider says, in policy or in a Data Processing Agreement, "we won't use your data for X." X is usually training. The commitment is audited under SOC2 and ISO 27001, exists alongside HIPAA Business Associate Agreements where relevant, and is the basis for what most procurement teams and most consumers think they're buying.

Generally, consumer tiers are by default opted-in to training; business tiers don't.

OpenAI's consumer products train on user content by default. The opt-out lives at Settings → Data Controls → "Improve the model for everyone." A 30-day retention window for abuse monitoring applies even with opt-out. ChatGPT Team, Enterprise, and the API by default do not train; Zero Data Retention is available for Enterprise API customers.

Anthropic's commercial tiers (Claude for Work, Claude Gov, Claude for Education, the Anthropic API including via Amazon Bedrock and Google Vertex AI) do not train. As of September 14, 2025, API log retention dropped from 30 days to 7, the strictest default in the industry. Consumer Claude is what changed in October 2025. Training is now enabled by default, with retention extending to 5 years for opted-in users versus 30 days for opted-out.

Google Gemini Apps consumer trains on conversations by default. Opt-out can be found at myaccount.google.com → Data and Privacy → Gemini Apps Activity. A 72-hour operational retention window persists even when activity is off. Workspace Gemini, Gemini for Google Cloud, and Vertex AI: contractually no training without explicit permission. Workspace Gemini was added to Google's HIPAA BAA on September 30, 2025.

Microsoft Copilot on a personal Microsoft account trains on conversation activity by default for adults 18 and over. Meta AI and Perplexity train by default; Meta's opt-out is narrower because Llama's training data was scraped from public content.

On May 5, 2026, OpenAI announced that Plus and Pro users would begin receiving more personalized responses by drawing on past chats, saved memories, available files, and connected Gmail context. Now that memory is reading email, the surface of what "your data" means in a privacy commitment extends through whatever connectors the user has attached.

Storage models differ between companies. OpenAI and Google use vector-backed memory the user cannot directly inspect. Anthropic uses human-readable markdown files the user can open and edit.

Two weeks before the Gmail-memory announcement, OpenAI shipped an open-weight Privacy Filter for detecting and redacting personally identifiable information in text. The release framing was developer-facing, about making it easier for products built on OpenAI's API to filter PII before processing. The implication, of course, is that the company sees a non-trivial fraction of what flows through its products as containing PII its developers want to redact.

It's worth mentioning that persistent memory plus connected services plus the kind of input volume PII filtering presumes is the prerequisite for ad-supported AI. Today's consumer AI products are ostensibly funded by subscriptions, but the infra to serve targeted advertising in chat is already shipped, so it won't take much for the ad-free model to change. History is littered with enshittified products that begin with "we'll never sell ads" and soon find economic conditions in which they decide to serve a different master: Google search, Facebook, Reddit, Twitter, every consumer-facing platform that ran on "free" subscription or VC runway long enough.

Does any consumer AI product currently have a durable commitment that would prevent them from eventually being remolded around serving highly targeted personalized ads?

The cryptographic floor

The second layer to privacy in AI pertains to how data is stored. Prompts and responses travel under TLS, the same protocol that protects banking sessions and email login. Stored data sits behind AES-256 or equivalent at rest.

What this layer does not do is protect prompts from the provider while inference runs. The model server holds the plaintext during processing, and logs may capture it for the retention windows already discussed. The provider, by contractual commitment, agrees not to look, but the architecture does not prevent looking, and Apple's own PCC announcement frames this as the reason a different architecture was needed: "the traditional cloud service security model isn't a viable starting point" for the privacy guarantees consumer AI deserves.

This is the layer where most consumer mental models stop. "My data is encrypted" feels like a complete answer. TLS in transit and AES at rest defend against external network adversaries, against most third-party interception, and against most operational mistakes that would otherwise expose data in flight. They do not defend against the provider, or court-ordered preservation, or operational mistakes inside the provider's perimeter, or against vendors the provider shares data with.

In-use cryptography

The third layer is the architectural exception. The provider cannot see the prompt even during processing. Almost no consumer AI service operates here.

The cleanest consumer-facing example is Apple Private Cloud Compute, which began rolling out with Apple Intelligence in late 2024 and now handles cloud-routed prompts that exceed local on-device capacity. The architecture: Apple Silicon servers, Secure Enclave, ephemeral processing entirely in memory with no persistent storage, no SSH or remote shell, signed binaries published to a transparency log before deployment, source code for security-critical components on GitHub, and Apple Security Bounty rewards extended to PCC vulnerabilities. Apple itself cannot read the contents of a PCC inference, and no admin tier exists with that access.

Trail of Bits' review called PCC a meaningful step forward in cloud AI security and named where it falls short. PCC is not end-to-end encrypted ML. End-to-end encrypted ML would require fully homomorphic encryption — computing on ciphertexts without decrypting them — and FHE is still impractical at consumer scale. PCC trusts Apple's silicon and Apple's published binaries; the cryptography itself is not end-to-end.

There is a deeper version of this caveat. Confidential computing architectures of all stripes — Intel TDX, AMD SEV-SNP, and the broader category PCC sits adjacent to — depend on physical security of the hardware. The threat models published by Intel and AMD have, since 2020, explicitly excluded on-line physical attacks against the memory bus as out of scope. In October 2025, academic researchers demonstrated under-$1,000 attacks against Intel TDX and AMD SEV-SNP that extract the attestation keys those architectures rely on. The paper presents at IEEE Symposium on Security and Privacy May 18–22, 2026.

There is also a recent complication. In January 2026, Apple and Google announced a multi-year collaboration under which the next generation of Apple Foundation Models will be based on Google's Gemini models and cloud technology. Apple's statement specified that Apple Intelligence will continue to run on Apple devices and Private Cloud Compute, "while maintaining Apple's industry-leading privacy standards." The posture of PCC's stateless, attested cloud is preserved, but the model running inside the PCC envelope is not. For the purpose of this architecture, this matters less than it might seem; PCC's privacy guarantee is about what the operator (Apple) can see, not which model is doing the inference. But it does mean the foundation model behind a PCC-routed Apple Intelligence prompt is increasingly not Apple's.

This level of security in consumer AI is, as of mid-2026, looks like a one-vendor story. Meta has Private Processing, with Trail of Bits findings still partially open a high-severity reproducible-builds finding, an OHTTP de-anonymization issue. Google reportedly engaged NCC Group for an audit of its cloud AI privacy components but has not published source code or made architectural claims comparable to Apple's. OpenAI, Anthropic, and Microsoft do not currently ship this type of architecture for their consumer products.

On-device AI was a nice idea while it lasted

The privacy-strongest path in 2026 is the one where the data never leaves the device. On-device AI eliminates the cloud entirely: no provider perimeter, no contractual layer, no in-use cryptography needed because there is no remote processing.

The models that run on consumer devices today are small. Apple Intelligence's on-device foundation models are roughly 3 billion parameters. Google's Gemini Nano runs at 1.8 to 3.25 billion parameters with 4-bit quantization. These are useful for summarization, smart reply, basic rewriting, on-device search. They are not frontier-class reasoning. For the kind of reasoning that powers Claude Opus 4.7, GPT-5, or Gemini 3 Pro, the model is too large to fit on consumer hardware in any practical form, and the inference is too computationally expensive to run at usable latency without data-center GPU clusters.

This isn't a problem affordability solves. A $1,000 phone can run Gemini Nano. A $15,000 home AI workstation with multiple consumer-grade GPUs cannot run Claude Opus 4.7 or GPT-5 at production latency. Frontier inference at usable latency is currently a rack of H100 or H200 GPUs in a data center, and that's not a configuration that lives in a residential setting at any price point.

The dynamic is also widening rather than narrowing. Frontier models grow faster than mobile silicon, and they grow faster than even desktop or workstation silicon can keep up with.

The most recent operational anchor for the on-device path is Chrome's Gemini Nano deployment. In May 2026, privacy researcher Alexander Hanff documented that Chrome had been silently downloading and installing the 4GB Gemini Nano model on macOS, Windows, and Linux machines without consent prompts. Google's framing was that on-device processing is itself a privacy feature, which it is. Hanff's analysis was that the deployment posture (no notification, ~14-minute background download, model placed in a hidden Chrome user-profile directory) was its own privacy concern.

For users whose AI workload is concentrated in the kinds of tasks small models do well, like summarization, smart reply, basic editing, and on-device search, the privacy posture is genuinely strong. For users whose AI workload involves the capabilities that drew them to AI in the first place, the prompt is still going to a data center, and the framework above is still the operative one.

So what are the options?

There's a case to be made for the Apple stack. iPhone or Mac for the on-device tier; Apple Private Cloud Compute for cloud-routed prompts. It's available only to users on Apple's hardware and within the Apple Intelligence feature set. The threat model PCC defends against is narrow but worth valuing: Apple itself cannot read the contents of a cloud inference. The threat models it does not defend against are physical compromise of Apple's data-center hardware (admittedly a small risk under Apple's operational posture but inherited from the broader confidential-computing category) and any prompt routed to Apple's third-party integrations such as the OpenAI ChatGPT path, which falls under the third party's separate privacy policy.

The second option is for local-only stacks. Ollama, LM Studio, and similar tools run open-weight models entirely on the user's hardware. The privacy posture is the strongest available because nothing leaves the machine, but the capability ceiling is the glaring constraint. The user trades capability for control.

The third case to be made is for anonymizing wrappers. Lumo from Proton, DuckDuckGo's AI Chat, and Brave's Leo all route user prompts through their own infrastructure to underlying models, typically a mix of OpenAI, Anthropic, Mistral, and others, while stripping identifying metadata before the prompt reaches the model. The user is anonymous to the model provider; the wrapper provider sees the prompt but commits contractually not to log or train on it. You're adding a layer to achieve a certain type of anonymization. It aims to address the "I don't want OpenAI to know it was me asking" threat model. It does not address the "I don't want anyone to see this prompt during processing" threat model, which only layer 3 architectures address.

The fourth option, of course, is enterprise contracts. For product builders whose customers' data cannot ride consumer tiers, like healthcare, financial services, legal, regulated industries generally, the major providers all hold the door open for you and offer Business Associate Agreements, Enterprise Data Protection terms, Zero Data Retention options, and contractual no-training commitments. The architecture is mostly the same as consumer-tier but the contract terms are stronger.

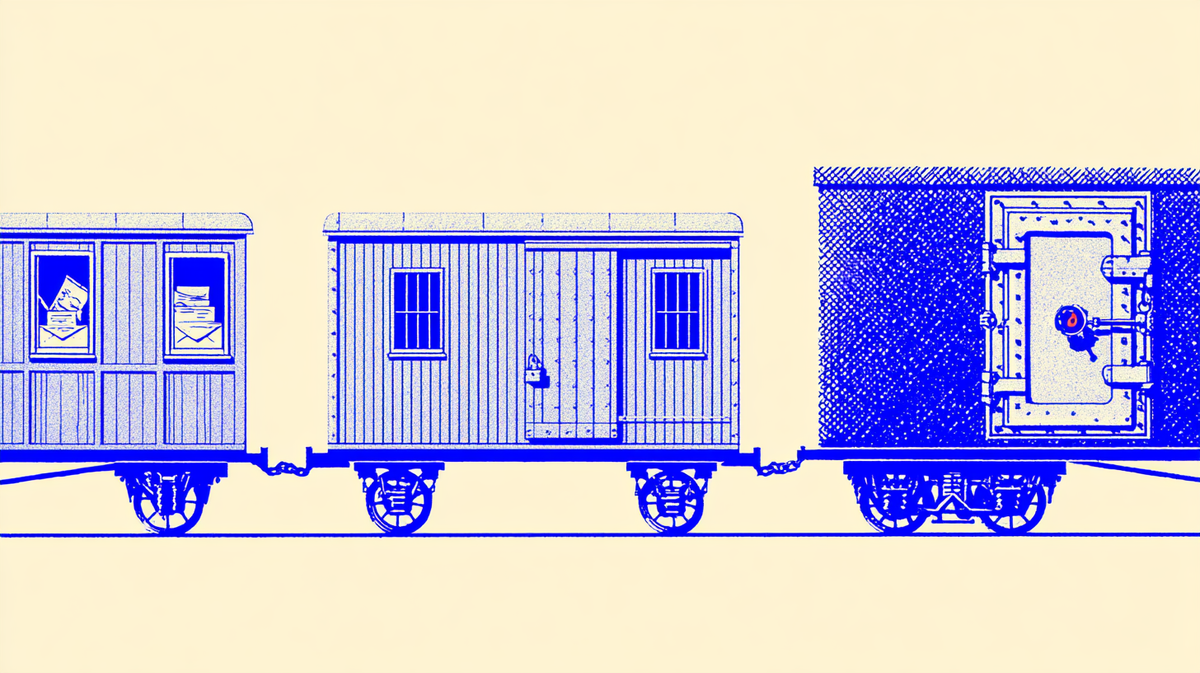

What the opt-out actually buys

Sure, you flip the switch to opt out. At every major provider, opting out of training stops future training. But it doesn't address the rest of the operational reality.

In May 2025, a federal court issued a preservation order in the New York Times' lawsuit against OpenAI, forcing OpenAI to retain consumer ChatGPT and API content indefinitely, including deleted chats, including content that would otherwise have been removed under the standard 30-day window. The order was lifted September 26, 2025. ChatGPT Enterprise was carved out during litigation. For consumer users, the standard retention guarantee was paused for roughly five months by external legal process.

In November 2025, an attacker breached Mixpanel, an analytics vendor used by OpenAI for API customer telemetry. Names, email addresses, and approximate locations of API users were exfiltrated. No chat content was exposed, per OpenAI's notification, but the contractual perimeter only extends as far as OpenAI's own systems.

Through July and August 2025, ChatGPT's "Share" feature, combined with a missing noindex directive and an unclear "Make this chat discoverable" toggle, resulted in thousands of shared conversations being indexed by Google search. xAI's Grok had a parallel incident in August 2025 in which over 370,000 conversations were similarly exposed. Both products subsequently removed the discoverability path. Neither violated a written contractual commitment, but both made layer-1 commitments meaningless for the affected users.

Tenable's November 2025 disclosure of seven ChatGPT vulnerabilities included persistent memory exfiltration, where an attacker's instructions, embedded in a document the user asks ChatGPT to summarize, become entries in the user's long-term memory and execute on every subsequent conversation. Check Point Research disclosed a separate channel in February 2026 where a single malicious prompt could turn a ChatGPT conversation into a covert data exfiltration channel via DNS tunneling out of the code-execution sandbox. OpenAI patched this, but the pattern across both to take note of is that the agentic features that make consumer AI useful, like memory, code execution, web browsing, and file uploads also create channels by which contractual privacy commitments are bypassed without the provider violating them.

Basically, an opt-out only buys "your prompts won't train the next model." It's silent on every other failure mode a user might reasonably worry about.

What this piece doesn't settle

Advertising. Every prerequisite for deeply embedded ad-supported consumer AI is in place. Persistent memory is on by default at every major provider, and now in OpenAI's case reading through to connected Gmail. The historical pattern at consumer platforms is that this is only a matter of time.

Will the security of layer-3 architectures expand beyond Apple? Apple's posture in this category was earned by an Apple Silicon design decision that predates the consumer AI market. Will OpenAI, Anthropic, Google, Microsoft, or Meta ship comparable verifiable architecture for their consumer products?

The on-device limit. As long as frontier reasoning requires data-center compute, the strongest available privacy posture (on-device) cannot host the strongest available capabilities. Quantization, distillation, and architectural advances in small models continue to compress the gap on small-model territory, but whether the frontier itself moves into reach of consumer silicon is a much harder question, and current trajectories say no.